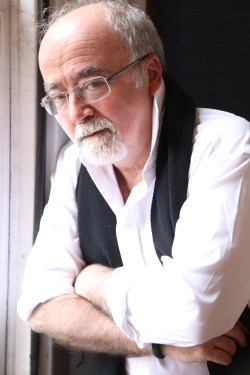

Talking Headlines: with Professor Jim Coyne

James Coyne is Emeritus Professor of Psychology in Psychiatry at the University of Pennsylvania. He is also Director of Behavioral Oncology at the Abramson Cancer Centre and Senior Fellow at the Leonard Davis Institute of Health Economics. His main area of interest is health psychology and depression. Professor Coyne’s work in psychology and psychiatry is consistently cited for its impact. Jim is also an active blogger, confronting editorial practices and media science reports that favour sensationalism at the expense of scientific content. Jim is currently visiting Scotland as a 2015 Carnegie Centenary Visiting Professor at the University of Stirling.

Jim, can you define a moment when you became so actively interested in science reporting?

As Director of Behavioral Oncology, I became aware of inaccurate portrayals in the media of psychological factors altering the course and outcome of cancer. Unrealistic stories tell of people overcoming cancer by sheer mind power. These tall tales do a lot of damage. Not only do they give people false hope, they provide the message that persons with cancer have only themselves to blame if they did not obtain better outcomes by adopting the right attitude and showing more fight. But people with cancer who have a fighting spirit die at the same rate as those who don’t.

My research also clearly showed that psychotherapy and support groups, whatever other benefits they might have, do not affect the course or outcome of cancer.

But promoters persist in making unrealistic claims that the media eagerly pick up. These claims set the stage for marketing other exploitative quackery and for patients not settling for the limits of what conventional, evidence-based treatments can provide.

Is there a particular type of bad reporting that triggers your blog writing?

Stories that get people worried without good reason about things like vaccines, fluoridation, or cell phones. One recent story reported that drinking four fizzy soft drinks (but, oddly, not those without carbonation) shortened your life span as much as smoking. This prompted me to provide a guide to debunking such claims.

I also get upset by stories that suggest yet another human element of healthcare can be eliminated by reliance on unproven technology – like a simple blood test for depression that can substitute for a professional taking the time to talk with a patient and reach a diagnosis. We’re a long way from having a reliable and valid test of this kind available in clinical settings.

A lot of claims come from those who want to attract more resources for their work than are justified by available evidence. The Director of the US National Institute of Mental Health circulated rubbish claims that an adolescent having a depressed mother aged the young girl four years. So, we should devote research funds to finding out how to prevent or reverse this. I showed him much mercy when I demonstrated how to take this claim apart.

Journalists often churnal these kinds of sensational stories. They simply copy a press release and pass it off as the result of actually researching the story themselves.

Misleading stories are fair game for a critical blog post, but there is so much bad stuff out there and so little time to blog.

In the UK, we are noticing science reporting is actually getting better with some very good coverage at times. Do you think the same can be said for other countries, the US for example?

I’ve only been over in the UK for a short while, but I haven’t been impressed by the quality of scientific or biomedical reporting. The BBC headlined authors’ claims that psychotherapy had been shown to be as effective as medication for schizophrenia and then had to change the headline when criticism appeared in the blogosphere. The Guardian passed on authors’ claims of an inexpensive genetic test for depression in pregnant women, and had to be corrected by NHS Choices.

We have an international problem on our hands. As competition has tightened, getting attention is more important than being accurate. The media – just like the scientific journals – are more interested in novelty and claims of breakthroughs, even when they know that such claims cannot be sustained.

Do you think health-related subjects are more easily affected by bad reporting?

I think claims that people can do something that will improve their health or extend their lives have always been newsworthy. People seek concrete suggestions and tools they can apply themselves. They are willing to ignore that the advice that they are getting contradicts what they were just told a short while ago.

I don’t know – and here I may be getting myself in trouble – but I think in the UK, there’s more willingness to see a lack of personal wellness as a moral fault. There is certainly more of a nannyism by which people are willing to permit the government to put restrictions on, say, fast food outlets, when there is a lack of strong evidence that having them around is a major source of obesity. I think it becomes a distraction from looking for things that will really work – like playgrounds and safe bike lanes.

Is there a reason why we all like reading in the news that X causes Y?

Science reporting serves us this up because that’s what we want. Claims that something causes something else are much more exciting than more sober assessments that something is associated with something else and might be causal. We can try to impress on the public that correlation is not necessarily causality, but we’re no more successful than in discouraging researchers from making causal claims based on correlations in the first place.

Just recently I saw a lot of attention being given to the Los Angeles Times story that drinking coffee cancels the effects of drinking alcohol on your health. No one had done a clinical trial where drinkers randomly got assigned to getting coffee or not, in addition to alcoholic drinks. Some researchers had only applied complex statistics to a large data set in which there was information about drinking both coffee and alcohol. I’m sure that different statistical analyses conducted with different assumptions would not have produced such results.

Paper retraction is not uncommon for high profile journals. Do you think editors might overlook methodological flaws to favour stories that could make the headlines?

I don’t think that editors of the so-called vanity journals deliberately publish junk science. But there’s ample evidence of them being distracted from questions of whether particular findings can be reproduced in independent research. The prestigious high impact journals have higher rates of having to retract stories, but it doesn’t seem to affect their willingness to publish outrageous stories in the first place. There is a serious failure of peer review before publication in such journals.

Finally, what is the best tip you would give us for the next time we read that a cause for autism, or a diet preventing cancer, has been found?

Take a deep breath and remember that lots of claims like this have come before, only to be forgotten when replaced by the next distorted claim. Real breakthroughs occur infrequently, and most claims soon prove exaggerated or simply false.

Don’t depend on media coverage based on single studies. It is quite likely that if a story is that important, it will be echoed and re-examined in the social media. Wait and see how it fares there.

Social media, ranging from Twitter to blogs, are becoming an extremely important way of independently evaluating claims that may have slipped into the media simply on the basis of being attention-grabbing. Independent review in the social media has really come into its own. I invite everybody to find a few trusted sources to filter and interpret what they read elsewhere.

Reblogged this on Quick Thoughts and commented:

My interview with Silvia Parracchini